Introduction

Generative AI refers to a class of artificial intelligence techniques designed to produce new content, data, or information that resembles the examples they were trained on. From generating realistic images and music to creating coherent text, generative AI is rapidly transforming industries including entertainment, marketing, and healthcare. The challenge lies in harnessing these technologies while maintaining output quality, addressing ethical concerns, and finding practical applications.

In this article, we will explore the intricacies of generative AI, guiding you from basic concepts to advanced techniques. We will provide practical solutions with code examples, compare different models and frameworks, illustrate concepts with visual elements, and showcase real-world applications.

What is Generative AI?

Generative AI involves algorithms that can create new content based on the patterns learned from existing data. The primary types of generative models include:

- Generative Adversarial Networks (GANs): A framework where two neural networks, the generator and the discriminator, compete against each other so that the generator learns to produce high-quality data.

- Variational Autoencoders (VAEs): A type of autoencoder that learns to represent data in a latent space, enabling the generation of new samples.

- Transformers: A model architecture that excels in sequence-to-sequence tasks, widely used in natural language processing.

Step-by-Step Technical Explanation

Understanding Generative Models

1. Generative Adversarial Networks (GANs)

GANs utilize two neural networks:

- Generator (G): Creates fake data.

- Discriminator (D): Evaluates the data and distinguishes between real and fake.

The training process involves:

- Initialize G and D with random weights.

- Train D: Feed it real data and fake data generated by G. Update D to maximize the probability of correctly identifying real and fake data.

- Train G: Update G to maximize the probability of D making a mistake, i.e., classifying the fake data as real.
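The two objectives above can be sketched as binary cross-entropy losses computed from the discriminator's output probabilities. This is a minimal, framework-free illustration; the function names and the sample probabilities are assumptions made for the example and do not come from any library.

```python
import math

def discriminator_loss(d_real, d_fake, eps=1e-12):
    """Binary cross-entropy for D: real samples are labeled 1, generated samples 0.

    d_real and d_fake are lists of probabilities D assigned to real and
    generated samples respectively.
    """
    real_term = -sum(math.log(p + eps) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p + eps) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake, eps=1e-12):
    """Loss for G: it is low when D classifies generated samples as real."""
    return -sum(math.log(p + eps) for p in d_fake) / len(d_fake)

# Hypothetical discriminator outputs for one small batch.
d_real = [0.9, 0.8]   # D is fairly sure these are real
d_fake = [0.2, 0.1]   # D is fairly sure these are fake

print("D loss:", discriminator_loss(d_real, d_fake))
print("G loss:", generator_loss(d_fake))
```

Minimizing the first loss corresponds to the "Train D" step, and minimizing the second corresponds to the "Train G" step: the generator's loss falls as the discriminator's probabilities for fake samples rise.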

Model Definition Example in Python (the generator and discriminator networks; the training loop is not shown):

```python
import tensorflow as tf
from tensorflow.keras import layers

def build_generator():
    # Maps a 100-dimensional noise vector to a 28x28x1 image with values in [-1, 1].
    model = tf.keras.Sequential()
    model.add(layers.Input(shape=(100,)))
    model.add(layers.Dense(256, activation='relu'))
    model.add(layers.Dense(512, activation='relu'))
    model.add(layers.Dense(1024, activation='relu'))
    model.add(layers.Dense(28 * 28 * 1, activation='tanh'))
    model.add(layers.Reshape((28, 28, 1)))
    return model

def build_discriminator():
    # Maps a 28x28x1 image to the probability that it is real.
    model = tf.keras.Sequential()
    model.add(layers.Input(shape=(28, 28, 1)))
    model.add(layers.Flatten())
    model.add(layers.Dense(512, activation='relu'))
    model.add(layers.Dense(256, activation='relu'))
    model.add(layers.Dense(1, activation='sigmoid'))
    return model
```

2. Variational Autoencoders (VAEs)

VAEs consist of two components:

- Encoder: Compresses input data into a latent representation.

- Decoder: Reconstructs the data from the latent representation.

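The VAE loss function combines a reconstruction loss with a Kullback-Leibler (KL) divergence term. The KL term pulls the encoder's latent distribution toward a simple prior, typically a standard normal, which keeps the latent space smooth enough to sample new points from.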
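As a rough illustration of that loss, the sketch below computes both terms for a single example in plain Python. It assumes a diagonal Gaussian encoder, a standard normal prior, and binary cross-entropy as the reconstruction loss; these are common choices but they are assumptions here, and the function names are made up for the example.

```python
import math

def kl_to_standard_normal(z_mean, z_log_var):
    """Closed-form KL( N(mean, exp(log_var)) || N(0, 1) ), summed over latent dimensions."""
    return -0.5 * sum(
        1.0 + log_var - mean * mean - math.exp(log_var)
        for mean, log_var in zip(z_mean, z_log_var)
    )

def reconstruction_loss(x, x_hat, eps=1e-7):
    """Binary cross-entropy between input pixels x and reconstruction x_hat (both in [0, 1])."""
    return -sum(
        xi * math.log(xh + eps) + (1.0 - xi) * math.log(1.0 - xh + eps)
        for xi, xh in zip(x, x_hat)
    )

def vae_loss(x, x_hat, z_mean, z_log_var):
    """Total loss for one example: reconstruction term plus KL term."""
    return reconstruction_loss(x, x_hat) + kl_to_standard_normal(z_mean, z_log_var)

# Hypothetical values for a 4-pixel input and a 2-dimensional latent space.
x = [1.0, 0.0, 1.0, 0.0]
x_hat = [0.9, 0.2, 0.8, 0.1]
z_mean = [0.5, -0.3]
z_log_var = [-0.1, 0.2]

print("KL term:", kl_to_standard_normal(z_mean, z_log_var))
print("Total loss:", vae_loss(x, x_hat, z_mean, z_log_var))
```

The KL term is zero only when the encoder outputs exactly the prior (zero mean, zero log-variance) and grows as the latent distribution drifts away from it.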

Model Definition Example in Python (the encoder, sampling step, and decoder; the loss and training loop are not shown):

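```python
import math

import tensorflow as tf
from tensorflow.keras import layers

def build_vae(input_shape):
    # Encoder: flatten the input and predict the mean and log-variance of the latent distribution.
    inputs = layers.Input(shape=input_shape)
    x = layers.Flatten()(inputs)
    x = layers.Dense(512, activation='relu')(x)
    z_mean = layers.Dense(2)(x)
    z_log_var = layers.Dense(2)(x)

    def sampling(args):
        # Reparameterization trick: z = mean + std * epsilon, with epsilon drawn from N(0, I).
        z_mean, z_log_var = args
        epsilon = tf.random.normal(shape=tf.shape(z_mean))
        return z_mean + tf.exp(0.5 * z_log_var) * epsilon

    z = layers.Lambda(sampling)([z_mean, z_log_var])

    # Decoder: map the latent sample back to the full input shape (all dimensions, including channels).
    decoder_h = layers.Dense(512, activation='relu')(z)
    outputs = layers.Dense(math.prod(input_shape), activation='sigmoid')(decoder_h)
    outputs = layers.Reshape(input_shape)(outputs)

    # This model only returns reconstructions; the KL term must still be added to the training loss.
    vae = tf.keras.Model(inputs, outputs)
    return vae
```

3. Transformers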

Transformers use self-attention mechanisms to weigh the importance of different parts of the input data. They have revolutionized natural language processing, enabling models like BERT and GPT.
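To make the weighting concrete, here is a minimal sketch of scaled dot-product attention in plain Python. It assumes the queries, keys, and values are passed in directly; in an actual transformer they are learned linear projections of the input, and the computation is batched and split across multiple heads. The function names are illustrative only.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors.

    For each query, score every key by dot product, scale by sqrt(d_k),
    normalize with softmax, and return the weighted sum of the values.
    """
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Three hypothetical 2-dimensional token vectors attending to each other.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens, tokens, tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row shows how much one token attends to every token in the sequence; the rows sum to 1 because of the softmax.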

Text Generation Example in Python (using a pretrained GPT-2 model from Hugging Face’s Transformers library):

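```python
from transformers import GPT2LMHeadModel, GPT2Tokenizer

# Load the pretrained GPT-2 weights and the matching tokenizer.
model = GPT2LMHeadModel.from_pretrained('gpt2')
tokenizer = GPT2Tokenizer.from_pretrained('gpt2')

# Encode the prompt as a PyTorch tensor of token IDs.
input_ids = tokenizer.encode("Once upon a time", return_tensors='pt')

# Generate a continuation of at most 50 tokens, prompt included.
# GPT-2 has no pad token, so the end-of-sequence token is used for padding.
output = model.generate(
    input_ids,
    max_length=50,
    num_return_sequences=1,
    pad_token_id=tokenizer.eos_token_id,
)

print(tokenizer.decode(output[0], skip_special_tokens=True))
```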

Comparison of Generative Models

| Model Type | Strengths | Weaknesses | Use Cases |
|---|---|---|---|
| GANs | High-quality image and video generation | Difficult to train; mode collapse | Image synthesis, style transfer |
| VAEs | Smooth latent space; good for reconstruction | Blurry outputs compared to GANs | Anomaly detection, image generation |
| Transformers | State-of-the-art in NLP; flexible | Requires large datasets; high computational cost | Text generation, translation |

Visual Representation of Generative Model Training

```mermaid
flowchart TD
    A[Start Training] --> B[Initialize Generator and Discriminator]
    B --> C{Train Discriminator}
    C -->|Real Data| D[Update D for Real Data]
    C -->|Fake Data| E[Update D for Fake Data]
    D --> F[Train Generator]
    E --> F
    F --> G[Evaluate Performance]
    G --> H{Continue Training?}
    H -->|Yes| C
    H -->|No| I[End Training]
```
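The loop in the diagram can be sketched end to end with a deliberately tiny, framework-free GAN. Everything here is an assumption made for illustration: the real data are one-dimensional samples from a Gaussian with mean 4, the generator is the linear map `a * z + b`, the discriminator is a logistic unit `sigmoid(w * x + c)`, and the gradients are written out by hand. A linear discriminator can only push the generator's mean toward the data, so the point is the control flow, not sample quality.

```python
import math
import random

def sigmoid(t):
    """Numerically stable logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)

def train_toy_gan(steps=500, batch=16, lr=0.05, seed=0):
    rng = random.Random(seed)
    # Initialize generator G(z) = a*z + b and discriminator D(x) = sigmoid(w*x + c).
    a, b = rng.uniform(-1, 1), rng.uniform(-1, 1)
    w, c = rng.uniform(-1, 1), rng.uniform(-1, 1)

    for _ in range(steps):
        # Train the discriminator on one batch of real and one batch of fake data.
        real = [rng.gauss(4.0, 0.5) for _ in range(batch)]
        fake = [a * rng.gauss(0.0, 1.0) + b for _ in range(batch)]
        grad_w = grad_c = 0.0
        for x in real:            # loss term: -log D(x)
            s = sigmoid(w * x + c)
            grad_w += -(1.0 - s) * x
            grad_c += -(1.0 - s)
        for x in fake:            # loss term: -log(1 - D(x))
            s = sigmoid(w * x + c)
            grad_w += s * x
            grad_c += s
        w -= lr * grad_w / batch
        c -= lr * grad_c / batch

        # Train the generator so that D rates its samples as real: -log D(G(z)).
        noise = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        grad_a = grad_b = 0.0
        for z in noise:
            s = sigmoid(w * (a * z + b) + c)
            grad_a += -(1.0 - s) * w * z
            grad_b += -(1.0 - s) * w
        a -= lr * grad_a / batch
        b -= lr * grad_b / batch

    return (a, b), (w, c)

# Evaluate: b is the mean of the generated samples, because the noise z has zero mean.
(a, b), (w, c) = train_toy_gan()
print("generator mean:", b, "generator scale:", a)
```

In practice the evaluation step would look at generated samples or a quality metric rather than a single parameter, and both networks would be updated by an optimizer from a deep learning framework.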

Real-World Case Studies

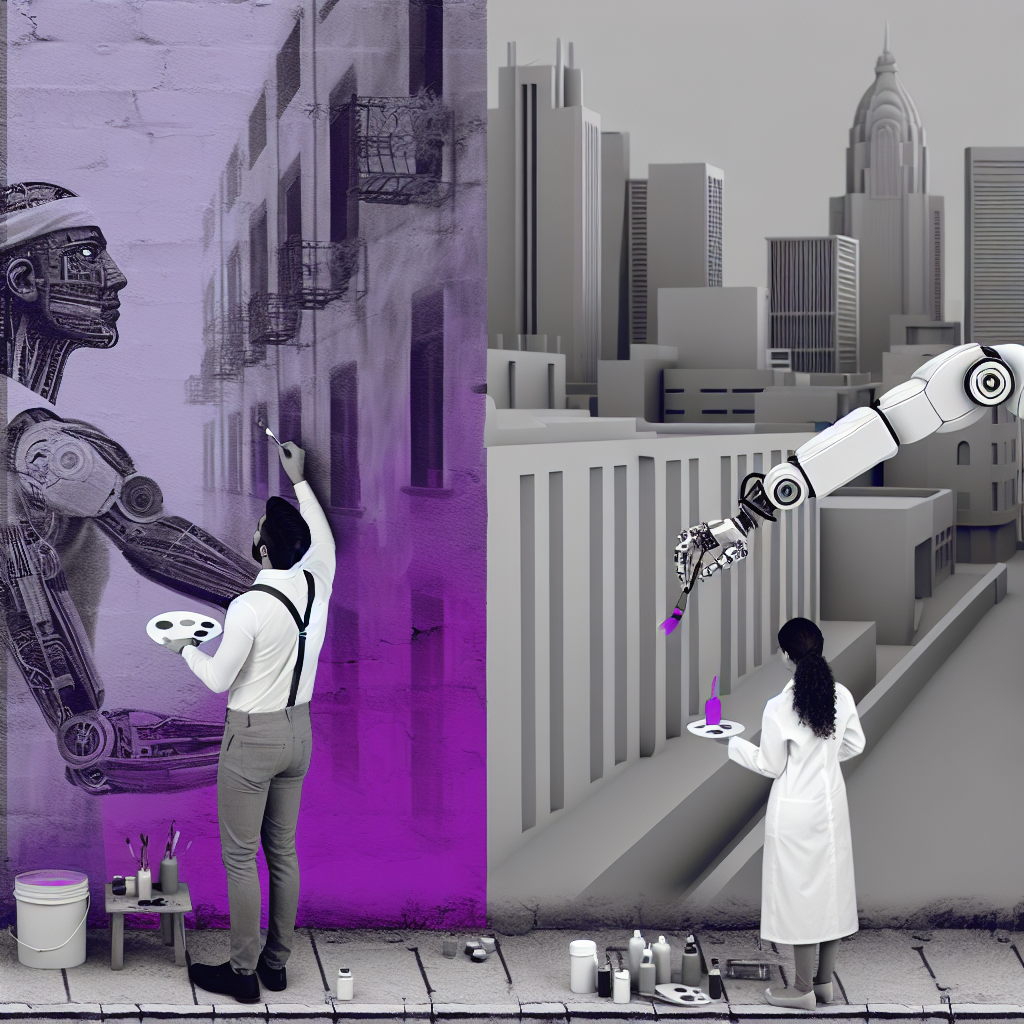

Case Study 1: Art Generation with GANs

Scenario: An artist wants to generate new artworks based on their style.

Solution: By training a GAN using a dataset of existing artworks, the generator can create new pieces that maintain the artist’s unique style. This can lead to new creative explorations and collaborations between human artists and AI.

Case Study 2: Text Generation for Marketing

Scenario: A marketing team aims to automate content creation for blog posts and social media.

Solution: Utilizing a transformer-based model like GPT-3, the team can generate relevant and engaging content quickly. This not only saves time but also allows for personalized marketing strategies based on consumer behavior.

Case Study 3: Medical Image Synthesis

Scenario: A healthcare organization aims to improve the training of diagnostic models with limited medical images.

Solution: By training a VAE on existing medical images, the organization can generate synthetic images that resemble real patient data. Adding these images to the training set can improve the performance of diagnostic models when real data are scarce, which in turn may support better patient outcomes.

Conclusion

Generative AI is a powerful tool that can significantly enhance creativity and efficiency across various sectors. As we have explored, different models such as GANs, VAEs, and Transformers offer unique advantages and applications.

Key Takeaways

- Choose the Right Model: Depending on your use case, select the appropriate generative model.

- Ethics and Bias: Be aware of ethical considerations and biases in the generated content.

- Experimentation is Key: Iterative testing and fine-tuning can lead to better results.

Best Practices

- Start with a well-curated dataset.

- Use established libraries and frameworks to save time.

- Monitor model performance and adapt as necessary.

Useful Resources

- Libraries:
  - TensorFlow (https://www.tensorflow.org/)
  - PyTorch (https://pytorch.org/)
  - Hugging Face Transformers (https://huggingface.co/transformers/)

- Frameworks:
  - GANLab (https://poloclub.github.io/ganlab/)
  - OpenAI’s GPT-3 (https://beta.openai.com/)

- Research Papers:
  - “Generative Adversarial Nets” by Ian Goodfellow et al.
  - “Auto-Encoding Variational Bayes” by D. P. Kingma and M. Welling
  - “Attention Is All You Need” by Ashish Vaswani et al.

By understanding and implementing the principles of generative AI, you can unlock new possibilities for innovation and efficiency in your projects.