Introduction

Computer Vision (CV) is a field of artificial intelligence that enables machines to interpret and understand the visual world. Working from digital images and video captured by cameras, CV systems perform tasks that the human visual system handles naturally, such as identifying objects, detecting faces, and recognizing patterns.

The challenge in computer vision lies in the sheer complexity of visual data. Images can vary significantly due to lighting conditions, angles, occlusions, and other variables. Consequently, developing robust models that can accurately analyze and interpret this visual data is a major hurdle.

In this article, we will explore the fundamentals of computer vision, discuss various approaches and models, provide practical code examples in Python, and analyze real-world applications. We will also compare different techniques, examine case studies, and highlight best practices in the field.

1. The Fundamentals of Computer Vision

1.1 What is Computer Vision?

Computer vision is a multidisciplinary field that combines elements of machine learning, image processing, and graphics to enable machines to perceive their surroundings. The goal is to automate tasks that require human visual intelligence, making it possible for computers to interpret and respond to visual information.

1.2 Key Areas in Computer Vision

- Image Classification: Assigning a label to an image based on its content.

- Object Detection: Identifying and locating objects within an image.

- Image Segmentation: Partitioning an image into meaningful regions, typically by assigning a label to every pixel.

- Facial Recognition: Identifying individuals based on facial features.

- Optical Character Recognition (OCR): Converting images of text into machine-readable text.

2. Challenges in Computer Vision

Despite advancements in technology, several challenges remain in computer vision:

- Variability in Images: Differences in lighting, scale, rotation, and occlusion can significantly affect model performance.

- Data Annotation: Labeling images for supervised learning can be time-consuming and costly.

- Computational Resources: Training deep learning models requires significant computational power and memory.

- Real-time Processing: Many applications, such as autonomous driving, require real-time analysis.
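The variability in lighting and viewpoint listed above can be simulated on existing images, which is one common way to expose a model to it during training. The following is a minimal NumPy sketch, assuming 8-bit grayscale input; the function names `adjust_brightness` and `horizontal_flip` are illustrative and not taken from any library.

```python
import numpy as np

def adjust_brightness(image, factor):
    """Scale pixel intensities by `factor`, clipping to the valid 8-bit range."""
    scaled = image.astype(np.float32) * factor
    return np.clip(scaled, 0, 255).astype(np.uint8)

def horizontal_flip(image):
    """Mirror the image left to right."""
    return image[:, ::-1]

# A tiny synthetic 2x3 "image" stands in for real data.
image = np.array([[10, 100, 200],
                  [50, 150, 250]], dtype=np.uint8)

brighter = adjust_brightness(image, 1.5)  # simulates stronger lighting
flipped = horizontal_flip(image)          # simulates a mirrored viewpoint

print(brighter)
print(flipped)
```

Values that would exceed 255 are clipped, which mirrors how a real sensor saturates under strong light.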

3. Technical Approaches to Computer Vision

3.1 Traditional Image Processing Techniques

Before the rise of deep learning, traditional techniques such as edge detection, histogram equalization, and template matching were widely used. These methods are still relevant today, especially for simpler tasks.
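Histogram equalization, one of the techniques named above, can be sketched in a few lines of NumPy. This is a simplified illustration that assumes an 8-bit grayscale image; the helper name `equalize_histogram` is hypothetical, and in practice a library routine would normally be used instead.

```python
import numpy as np

def equalize_histogram(image):
    """Spread the intensities of an 8-bit grayscale image over the 0-255 range."""
    hist = np.bincount(image.ravel(), minlength=256)
    cdf = hist.cumsum()
    cdf_min = cdf[cdf > 0][0]   # CDF value at the darkest intensity present
    total = image.size
    if total == cdf_min:        # constant image: nothing to equalize
        return image.copy()
    # Build a lookup table that maps each intensity through the normalized CDF.
    lut = np.round((cdf - cdf_min) / (total - cdf_min) * 255)
    lut = np.clip(lut, 0, 255).astype(np.uint8)
    return lut[image]

# A low-contrast 2x2 image whose values sit in a narrow band.
low_contrast = np.array([[100, 101],
                         [102, 103]], dtype=np.uint8)
equalized = equalize_histogram(low_contrast)
print(equalized)
```

The four nearly identical input intensities end up spread evenly across the full range, which is the contrast-stretching effect the technique is used for.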

Example: Edge Detection

Edge detection identifies boundaries within an image by locating sharp changes in intensity. The Canny edge detector is one of the most widely used algorithms; it keeps edges based on two gradient thresholds, a lower and an upper one.

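```python
import cv2
import matplotlib.pyplot as plt

# Load the image as a single-channel grayscale array.
image = cv2.imread('image.jpg', cv2.IMREAD_GRAYSCALE)
if image is None:
    raise FileNotFoundError("Could not read 'image.jpg'")

# Gradients above threshold2 are treated as strong edges; those between the
# two thresholds are kept only if they connect to a strong edge.
edges = cv2.Canny(image, threshold1=100, threshold2=200)

plt.imshow(edges, cmap='gray')
plt.title('Canny Edge Detection')
plt.axis('off')
plt.show()
```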
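To make the role of the two thresholds more concrete, here is a simplified NumPy sketch of two ingredients of Canny: Sobel gradient magnitude and double thresholding. It deliberately omits smoothing, non-maximum suppression, and hysteresis tracking, so it is not a full Canny implementation; all function names are illustrative.

```python
import numpy as np

SOBEL_X = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], dtype=np.float32)
SOBEL_Y = SOBEL_X.T

def filter2d_valid(image, kernel):
    """Slide `kernel` over `image` (no padding) and sum the elementwise products."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w), dtype=np.float32)
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

def gradient_magnitude(image):
    """Combine horizontal and vertical Sobel responses into one edge strength map."""
    image = image.astype(np.float32)
    gx = filter2d_valid(image, SOBEL_X)
    gy = filter2d_valid(image, SOBEL_Y)
    return np.hypot(gx, gy)

def double_threshold(magnitude, low, high):
    """Split pixels into strong edges (>= high) and weak candidates (>= low)."""
    strong = magnitude >= high
    weak = (magnitude >= low) & ~strong
    return strong, weak

# Synthetic 5x6 image: dark left half, bright right half, so one vertical edge.
image = np.zeros((5, 6), dtype=np.uint8)
image[:, 3:] = 255

magnitude = gradient_magnitude(image)
strong, weak = double_threshold(magnitude, low=100, high=200)
print(magnitude)
print(strong)
```

Only the windows that straddle the dark-to-bright boundary produce a non-zero gradient, so the strong-edge mask lights up in the middle columns and stays empty elsewhere.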

3.2 Deep Learning Approaches

Deep learning has reshaped computer vision by letting models learn features directly from data instead of relying on hand-designed ones. Convolutional Neural Networks (CNNs) are particularly effective for image-related tasks.

CNN Architecture

A typical CNN consists of:

- Convolutional Layers: Extract features from images.

- Activation Functions: Introduce non-linearity (e.g., ReLU).

- Pooling Layers: Reduce dimensionality and computational load.

- Fully Connected Layers: Classify the features into output classes.
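The four building blocks listed above can be traced on a single-channel image with plain NumPy. This is a toy sketch with random, untrained weights and one filter, meant only to show how the data shape changes from layer to layer; the function names are illustrative.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Convolutional layer (single filter, no padding, stride 1)."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w), dtype=np.float32)
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

def relu(x):
    """Activation function: zero out negative responses."""
    return np.maximum(x, 0)

def max_pool2d(x, size=2):
    """Pooling layer: keep the maximum of each non-overlapping size x size window."""
    h, w = x.shape[0] // size, x.shape[1] // size
    x = x[:h * size, :w * size]
    return x.reshape(h, size, w, size).max(axis=(1, 3))

rng = np.random.default_rng(0)
image = rng.random((28, 28)).astype(np.float32)          # stand-in for a 28x28 input
kernel = rng.standard_normal((3, 3)).astype(np.float32)  # random, untrained filter

features = conv2d_valid(image, kernel)  # shape (26, 26)
activated = relu(features)              # same shape, no negative values
pooled = max_pool2d(activated)          # shape (13, 13)

# Fully connected layer: flatten and project onto 10 class scores.
weights = rng.standard_normal((pooled.size, 10))
scores = pooled.ravel() @ weights       # shape (10,)

print(features.shape, pooled.shape, scores.shape)
```

A real CNN stacks many filters per layer and learns the kernel and weight values during training, but the flow of shapes is the same.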

3.3 Popular Deep Learning Models

- LeNet-5: One of the earliest CNN architectures designed for handwritten digit recognition.

- AlexNet: Popularized deeper CNNs with ReLU activations and dropout, winning the ImageNet (ILSVRC) competition in 2012.

- VGGNet: Known for its uniform architecture and deeper layers.

- ResNet: Introduced residual connections, enabling the training of very deep networks.

| Model | Year | Depth (layers) | Key Features |
|---|---|---|---|
| LeNet-5 | 1998 | 7 | Early CNN, simple architecture |
| AlexNet | 2012 | 8 | ReLU activations, dropout |
| VGGNet | 2014 | 16-19 | Deep architecture, small (3x3) filters |
| ResNet | 2015 | 18-152 | Residual (skip) connections |
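The idea behind ResNet's residual connections can be written as `output = relu(F(x) + x)`: the block learns a correction `F(x)` that is added to its own input. The sketch below is a simplified NumPy version that uses two small linear layers for `F`; actual ResNet blocks use convolutions and batch normalization, so treat this as an illustration of the skip connection only.

```python
import numpy as np

def relu(x):
    return np.maximum(x, 0)

def residual_block(x, w1, w2):
    """Compute relu(F(x) + x), where F is two linear layers with a ReLU between."""
    fx = relu(x @ w1) @ w2
    return relu(fx + x)  # the "+ x" term is the skip connection

x = np.array([1.0, 2.0, 3.0])
zero_weights = np.zeros((3, 3))

# With all-zero weights F(x) is 0, so the block passes its input through unchanged.
out = residual_block(x, zero_weights, zero_weights)
print(out)
```

Because a block can fall back to the identity this easily, stacking many of them does not have to make the network harder to train, which is the property that enables very deep models.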

4. Practical Solutions with Code Examples

4.1 Implementing a Convolutional Neural Network

Let’s implement a simple CNN using Keras to classify handwritten digits from the MNIST dataset.

```python
import tensorflow as tf
from tensorflow.keras import layers, models

# Load MNIST: 60,000 training and 10,000 test images of 28x28 grayscale digits.
mnist = tf.keras.datasets.mnist
(x_train, y_train), (x_test, y_test) = mnist.load_data()

# Add a channel dimension and scale pixel values to [0, 1].
x_train = x_train.reshape((60000, 28, 28, 1)).astype('float32') / 255
x_test = x_test.reshape((10000, 28, 28, 1)).astype('float32') / 255

# Three convolutional stages followed by a small dense classifier.
model = models.Sequential()
model.add(layers.Conv2D(32, (3, 3), activation='relu', input_shape=(28, 28, 1)))
model.add(layers.MaxPooling2D((2, 2)))
model.add(layers.Conv2D(64, (3, 3), activation='relu'))
model.add(layers.MaxPooling2D((2, 2)))
model.add(layers.Conv2D(64, (3, 3), activation='relu'))
model.add(layers.Flatten())
model.add(layers.Dense(64, activation='relu'))
model.add(layers.Dense(10, activation='softmax'))

# Labels are integers 0-9, so use the sparse variant of cross-entropy.
model.compile(optimizer='adam', loss='sparse_categorical_crossentropy', metrics=['accuracy'])
model.fit(x_train, y_train, epochs=5)

test_loss, test_acc = model.evaluate(x_test, y_test)
print(f'Test accuracy: {test_acc}')
```
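It can help to check by hand how the feature map shrinks through this network and how many parameters each layer contributes. The short sketch below does that arithmetic in plain Python, assuming the layer settings used above (3x3 kernels, no padding, stride 1, 2x2 pooling); the helper names are illustrative.

```python
def conv_output_size(size, kernel, stride=1):
    """Spatial size after a convolution with no padding."""
    return (size - kernel) // stride + 1

def conv_params(kernel, in_channels, out_channels):
    """Weights plus one bias per output channel."""
    return kernel * kernel * in_channels * out_channels + out_channels

def dense_params(in_features, out_features):
    """Weights plus one bias per output unit."""
    return in_features * out_features + out_features

size = 28
size = conv_output_size(size, 3)  # Conv2D(32): 28 -> 26
size = size // 2                  # MaxPooling2D: 26 -> 13
size = conv_output_size(size, 3)  # Conv2D(64): 13 -> 11
size = size // 2                  # MaxPooling2D: 11 -> 5
size = conv_output_size(size, 3)  # Conv2D(64): 5 -> 3
flat = size * size * 64           # Flatten: 3 * 3 * 64 = 576

total_params = (
    conv_params(3, 1, 32)
    + conv_params(3, 32, 64)
    + conv_params(3, 64, 64)
    + dense_params(flat, 64)
    + dense_params(64, 10)
)
print(size, flat, total_params)  # 3 576 93322
```

These figures should match what `model.summary()` reports for the model defined above.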

4.2 Advanced Techniques: Transfer Learning

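Transfer learning lets us reuse a model pre-trained on a large dataset to improve performance on a specific task with limited data. For example, using VGG16 pre-trained on ImageNet as a frozen feature extractor, and training only a small classifier on top, can reduce training time considerably and often improves accuracy compared with training from scratch.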

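```python
from tensorflow.keras import layers, models
from tensorflow.keras.applications import VGG16
from tensorflow.keras.applications.vgg16 import preprocess_input
from tensorflow.keras.preprocessing.image import ImageDataGenerator

# Load the VGG16 convolutional base with ImageNet weights, without its classifier head.
base_model = VGG16(weights='imagenet', include_top=False, input_shape=(224, 224, 3))

# Freeze the pre-trained layers so that only the new head is trained.
for layer in base_model.layers:
    layer.trainable = False

model = models.Sequential()
model.add(base_model)
model.add(layers.Flatten())
model.add(layers.Dense(256, activation='relu'))
model.add(layers.Dense(10, activation='softmax'))

model.compile(optimizer='adam', loss='sparse_categorical_crossentropy', metrics=['accuracy'])

# Assumption: 'data/train' is a placeholder directory with one subfolder per class
# (10 classes here); replace it with your own dataset path.
datagen = ImageDataGenerator(preprocessing_function=preprocess_input)
train_generator = datagen.flow_from_directory(
    'data/train', target_size=(224, 224), class_mode='sparse')

model.fit(train_generator, epochs=5)
```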
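To see why freezing the base saves so much work, it helps to count parameters. The sketch below derives the size of the frozen VGG16 convolutional base and of the new trainable head in plain Python, assuming the standard VGG16 layout (3x3 convolutions that preserve spatial size, 2x2 pooling) and the head defined above; the names `VGG16_CONFIG` and `count_base` are illustrative.

```python
# Output channels of VGG16's 13 convolutional layers; 'M' marks a 2x2 max-pooling layer.
VGG16_CONFIG = [64, 64, 'M', 128, 128, 'M', 256, 256, 256, 'M',
                512, 512, 512, 'M', 512, 512, 512, 'M']

def count_base(config, input_size=224, in_channels=3):
    """Return (parameter count, output spatial size, output channels) of the base."""
    params = 0
    size, channels = input_size, in_channels
    for item in config:
        if item == 'M':
            size //= 2  # pooling halves height and width
        else:
            params += 3 * 3 * channels * item + item  # 3x3 weights plus biases
            channels = item
    return params, size, channels

frozen_params, size, channels = count_base(VGG16_CONFIG)
flat = size * size * channels  # 7 * 7 * 512 = 25088 features after Flatten

# New head: Dense(256) followed by Dense(10).
trainable_params = (flat * 256 + 256) + (256 * 10 + 10)

print(frozen_params, flat, trainable_params)  # 14714688 25088 6425354
```

Roughly 14.7 million pre-trained weights are reused as they are, and only the 6.4 million weights of the new head are updated during training.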

4.3 Comparison of Approaches

| Approach | Pros | Cons |
|---|---|---|
| Traditional Methods | Simple, interpretable | Limited accuracy |
| CNNs | High accuracy, automated feature extraction | Requires large datasets and compute power |
| Transfer Learning | Faster training, improved performance | Dependent on quality of pre-trained model |

5. Case Studies

5.1 Autonomous Vehicles

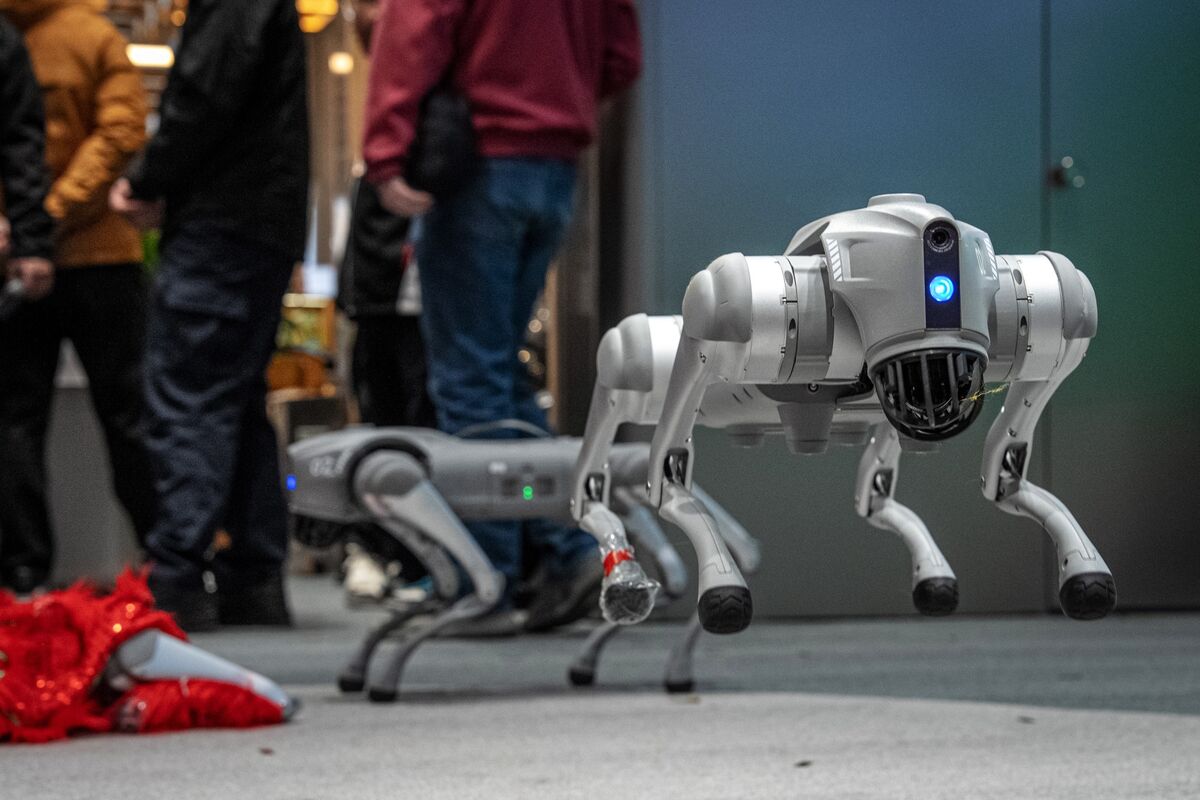

Autonomous vehicles rely heavily on computer vision for navigation and obstacle detection. Using a combination of cameras and LiDAR, these vehicles can detect pedestrians, other vehicles, and road signs.

5.2 Medical Imaging

In the healthcare sector, computer vision is transforming diagnostics. For example, CNNs can analyze medical images such as X-rays and MRIs to detect diseases like pneumonia or tumors.

5.3 Retail Analytics

Retailers use computer vision to track customer behavior in stores. By analyzing video footage, they can understand customer flow, optimize product placement, and enhance the shopping experience.

Conclusion

Computer vision is a rapidly evolving field with a multitude of applications across various industries. While challenges remain, advancements in deep learning and transfer learning are paving the way for more robust and efficient solutions.

Key Takeaways

- Understanding the Problem: Identifying the specific challenges in your computer vision task is crucial for choosing the right approach.

- Choosing the Right Model: Depending on the complexity and requirements of your application, select an appropriate model or algorithm.

- Utilizing Transfer Learning: When data is limited, leveraging pre-trained models can save time and improve performance.

- Continuous Learning: Stay updated with the latest research and advancements in the field to enhance your models.

Best Practices

- Always preprocess your data effectively.

- Experiment with different architectures and hyperparameters.

- Validate your models on unseen data to ensure robustness.
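Validating on unseen data starts with holding some samples back before training. The following is a minimal NumPy sketch of a random train/validation split; the function name `train_validation_split` and the 20% default are assumptions chosen for illustration.

```python
import numpy as np

def train_validation_split(x, y, validation_fraction=0.2, seed=0):
    """Shuffle the samples and hold out a fraction of them for validation."""
    if len(x) != len(y):
        raise ValueError("x and y must contain the same number of samples")
    rng = np.random.default_rng(seed)
    indices = rng.permutation(len(x))
    n_val = int(len(x) * validation_fraction)
    val_idx, train_idx = indices[:n_val], indices[n_val:]
    return (x[train_idx], y[train_idx]), (x[val_idx], y[val_idx])

# Ten dummy samples whose label equals their index, to make the split easy to inspect.
x = np.arange(10).reshape(10, 1)
y = np.arange(10)

(x_train, y_train), (x_val, y_val) = train_validation_split(x, y)
print(len(x_train), len(x_val))
```

Fixing the seed keeps the split reproducible, so results from different architectures and hyperparameters are compared on the same held-out samples.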

Useful Resources

Frameworks:

- Fastai

- Detectron2 (for object detection)

Research Papers:

- “ImageNet Classification with Deep Convolutional Neural Networks” – Alex Krizhevsky et al.

- “Deep Residual Learning for Image Recognition” – Kaiming He et al.

By embracing these principles and tools, practitioners in the field of computer vision can tackle increasingly complex challenges and contribute to groundbreaking advancements in technology.